Introduction

At Azurro, we consistently place importance on using the Open Source technologies, both while working on the projects and in our everyday lives. We have decided to share a base language model trained by us. We are confident that smaller language models have great potential, and direct access to them for all people that are interested in such models democratizes this significant and dynamically changing field even more.

Statements

Training large language models requires a lot of computing power and it is meant for the major players on the market. However, does it mean that individuals or small companies cannot train language models capable of performing specific tasks? We decided to answer this question and train our own language model from scratch.

We have made the following statements:

- – we use 1 consumer graphic card

- – we train the model only with the Polish corpus

- – we use manually selected, high quality texts for training the model.

Why have we made such statements?

It is worth noting that training a model requires several times more resources than using it. To put it simply, it can be assumed that it is about 3-4 times more. Therefore, if a model can be run with a graphic card that has 6 GB VRAM, then training this model requires about 24 GB VRAM (this is the minimum value).

Many consumer computers are equipped with good quality graphic cards that can be used for training a model at one’s own home. This is why we have decided to use a top consumer graphic card – Nvidia’s RTX 4090 24GB VRAM.

All the currently available language models have been trained mainly with English corpora with a little bit of other languages, including Polish. The effect is that these models are not the best at dealing with the Polish texts. Even the popular GPT models from OpenAI and Bard from Google often have issues with correct forms. Therefore we have decided to prepare a model based only on the Polish corpus. An additional advantage of using only the Polish corpus is the size of the model – it is better to focus on one language in the case of smaller models.

It is important to remember that models are only as good as the data with which they are trained. Given the small size of the model, we trained it with carefully selected texts. This is why we have not used corpora such as Common Crawl that contain a lot of poor-quality data. With close collaboration and advice from the Speakleash team, our team has prepared over 285GB of Polish language text corpus that has then been processed and used for training the model. Additionally, the unique feature of our model is that it has been trained on the largest amount of text among all available models for the Polish language.

Model – technical information

APT3-1B-Base has been trained with the use of an original open source framework called ALLaMo. This framework allows the user to train language models similar to the Meta AI’s LLaMA models quickly and efficiently.

APT3-1B-Base is an autoregressive language model based on the architecture of a transformer. It has been trained with data collected before the end of December 2023.

There is also an instruct fine-tuned version of this model (APT3-1B-Instruct-v1) available on HuggingFace.

The training dataset (the Polish corpus) has over 60 billion tokens, and we use all of them for training with one epoch.

A special tokenizer has been prepared and trained for the purpose of training the models in the APT3 series.

Model description:

- – developed by: Azurro

- – language: Polish

- – model type: causal decoder-only

- – license: CC BY NC 4.0 (non-commercial use)

- – available at: HuggingFace

Model details:

| Hyperparameter | Value |

|---|---|

| Model Parameters | 1041M |

| Sequence Length | 2048 |

| Vocabulary Size | 31980 |

| Layers | 18 |

| Heads | 32 |

| d_head | 64 |

| d_model | 1024 |

| Dropout | 0.0 |

| Bias | No |

| Positional Encoding | RoPE |

| Activation Function | SwiGLU |

| Normalizing Function | RMSNorm |

| Intermediate Size | 5504 |

| Norm Epsilon | 1e-06 |

Tokenizer details:

- – type: BPE

- – special tokens: 8

- – alphabet size: 113

- – vocabulary size: 31980

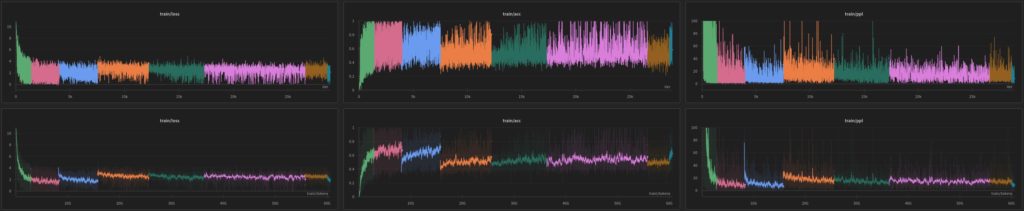

Training:

Training hyperparameters:

| Hyperparameter | Value |

|---|---|

| Micro Batch Size | 1 |

| Gradient Accumulation Steps | 1024 |

| Batch Size | 2097152 |

| Learning Rate (cosine) | 2e-04 -> 2e-05 |

| Warmup Iterations | 1000 |

| All Iterations | 28900 |

| Optimizer | AdamW |

| β1, β2 | 0.9, 0.95 |

| Adam_eps | 1e−8 |

| Weight Decay | 0.1 |

| Grad Clip | 1.0 |

| Precision | bfloat16 |

Dataset

Collecting a large amount of high quality training data is a great challenge. Over the past years at Azurro, we have done a lot of projects connected with processing Big Data. Therefore, with our extensive experience, we have been able to prepare carefully selected training dataset quickly and efficiently.

Our close collaboration with the Speakleash team has resulted in the creation of over 285GB of the Polish language text corpus. The process of preparing the training dataset involved transforming documents by applying various cleaning and repairing rules, followed by selecting documents of appropriate quality.

Our training dataset contains:

- – 150 datasets from Speakleash – 93%

- – other publicly available and crawled web data – 6%

- – Polish Wikipedia – 1%

Quickstart

This model can be easily loaded using the AutoModelForCausalLM functionality.

from transformers import AutoTokenizer, AutoModelForCausalLM

model_name = „Azurro/APT3-1B-Base”

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModelForCausalLM.from_pretrained(model_name)

In order to reduce the memory usage, you can use smaller precision (bfloat16).

import torch

model = AutoModelForCausalLM.from_pretrained(model_name, torch_dtype=torch.bfloat16)

And then you can use Hugging Face Pipelines to generate text:

import transformers

text = „Najważniejszym celem człowieka na ziemi jest”

pipeline = transformers.pipeline(„text-generation”, model=model, tokenizer=tokenizer)

sequences = pipeline(max_new_tokens=100, do_sample=True, top_k=50, eos_token_id=tokenizer.eos_token_id, text_inputs=text)

for seq in sequences:

print(f”Result: {seq[’generated_text’]}”)

Generated output:

„Najważniejszym celem człowieka na ziemi jest życie w pokoju, harmonii i miłości. Dla każdego z nas bardzo ważne jest, aby otaczać się kochanymi osobami.”

Limitations and Biases

APT3-1B-Base is not intended for deployment without fine-tuning. It should not be used for human-facing interactions without further guardrails and user consent.

APT3-1B-Base can produce factually incorrect output, and should not be relied on to produce factually accurate information. APT3-1B-Base was trained on various public datasets. While great efforts have been taken to clean the pretraining data, it is possible that this model could generate lewd, biased or otherwise offensive outputs.

License

Because of an unclear legal situation, we have decided to publish the model under CC BY NC 4.0 license – it allows for non-commercial use. The model can be used for scientific purposes and privately, as long as the license conditions are met.

Disclaimer

The license on this model does not constitute legal advice. We are not responsible for the actions of third parties who use this model.

Citation

Please cite this model using the following format:

@online{AzurroAPT3Base1B,

author = {Krzysztof Ociepa, Azurro},

title = {Introducing APT3-1B-Base: Polish Language Model},

year = {2024},

url = {www.azurro.pl/apt3-1b-base-en},

note = {Accessed: 2024-01-04}, % change this date

urldate = {2024-01-04} % change this date

}

Special thanks

We would like to especially thank the Speakleash team for collecting and sharing texts in Polish, and for the support we could always count on while preparing the training set for our model. Without you, it would not have been possible to train this model. Thank you!

The Azurro Team

Please find more information on our homepage.

Contact Us

If you have any questions or suggestions, please drop an email to [email protected].